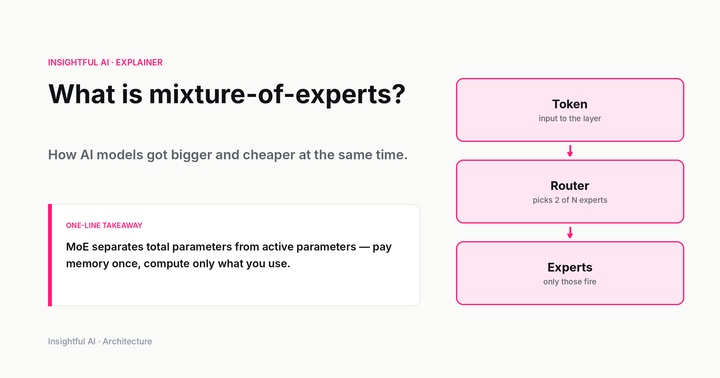

What is mixture-of-experts? The architecture behind cheaper trillion-parameter AI

Mixture-of-experts (MoE) is how a 671-billion-parameter model can cost less per token than a 70-billion-parameter one: only a fraction of its parameters run for each token. A plain-English guide to MoE, active vs. total parameters, routers and experts, and why open-weights frontier labs picked the architecture first.