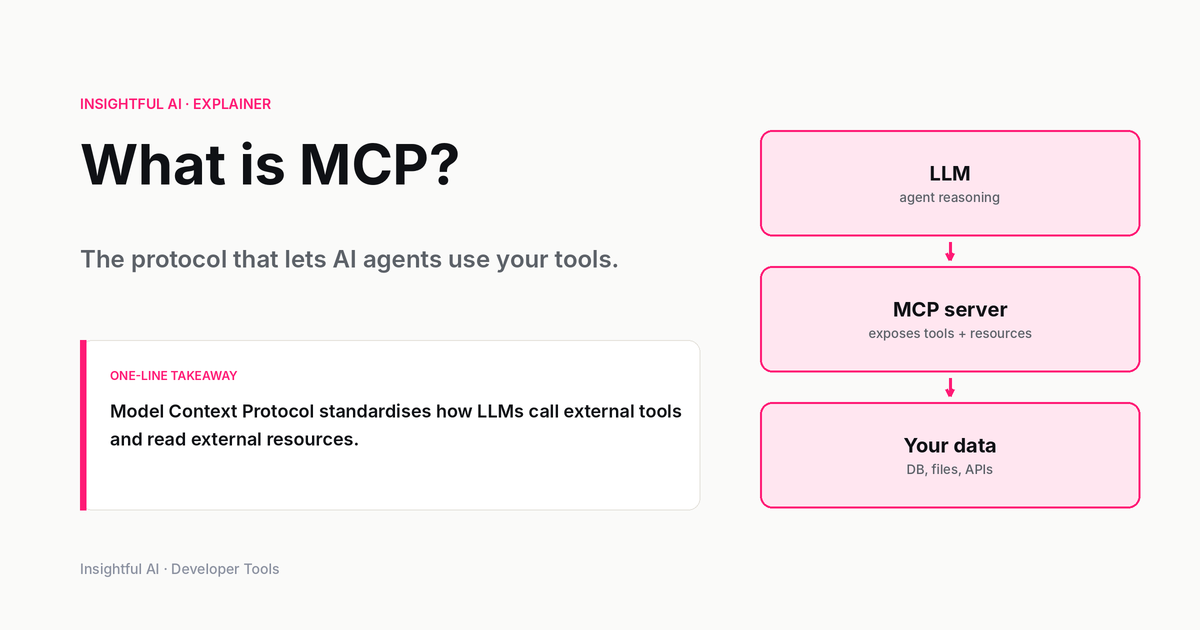

What is MCP? The protocol that lets AI agents use your tools

MCP is Anthropic's open standard for connecting AI assistants to external data and tools. Here's what it does, what it leaves to implementers, and what it changes for developers.

By Daniel Park, Insightful AI Desk

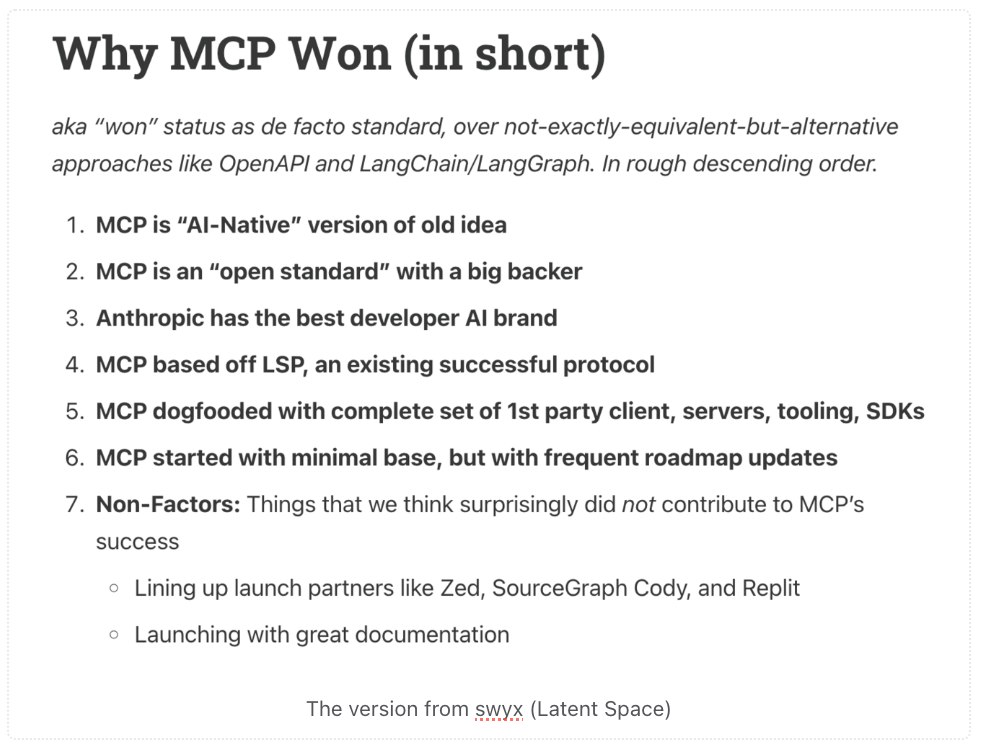

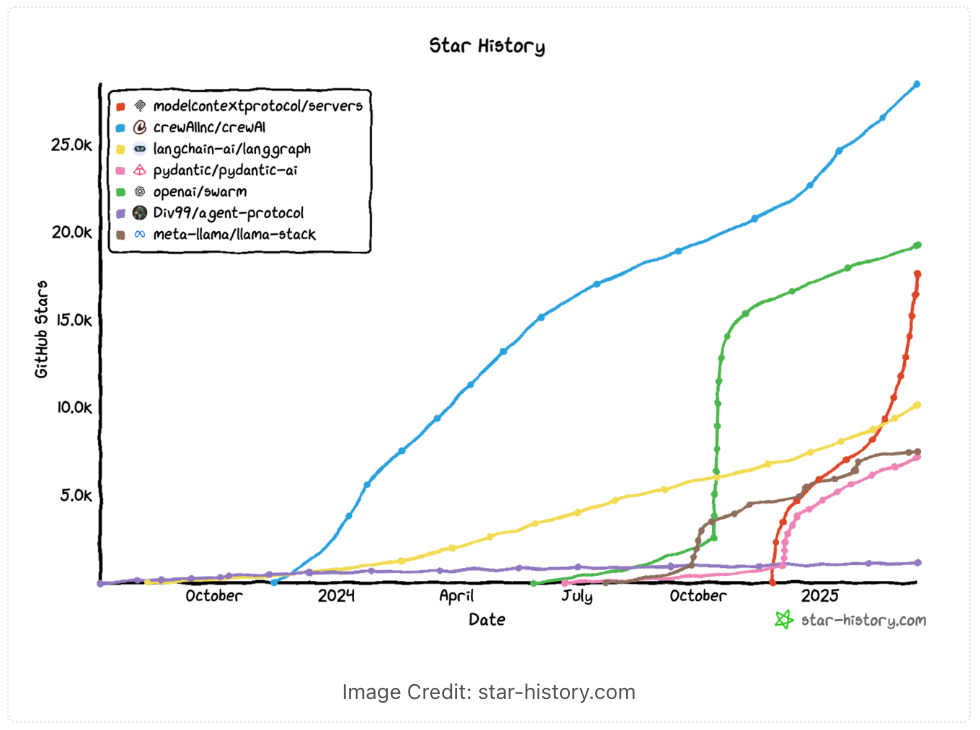

If you have been following AI developer tooling since late 2024, you have run into the abbreviation MCP. Anthropic announced the Model Context Protocol on 25 November 2024, describing it as “a new standard for connecting AI assistants to the systems where data lives.” It has since been adopted by enough independent vendors that “does it support MCP?” has become a standard question when evaluating developer tools.

This piece walks through what the protocol actually is, what it standardises, what it leaves to implementations, and what it changes for developers building AI-powered applications.

What MCP is, in one sentence

MCP is a protocol that defines how an AI client (a chatbot, a coding assistant, an autonomous agent) can connect to one or more separate processes that expose data, tools, or context, in a uniform way that does not require custom integration code for each combination.

Before MCP, every AI client that wanted to use external tools had to invent its own way of describing what tools existed, how to invoke them, what their parameters were, and how to interpret their responses. Vendor A’s chatbot called external functions one way. Vendor B’s used a different schema. Vendor C had its own plugin manifest. A tool vendor that wanted its capability to work inside every AI assistant had to write N integrations for N assistants.

MCP is the standardisation step that collapses N integrations into 1. A tool vendor implements an MCP server. Any AI client that speaks MCP (an MCP client) can connect to that server and use its capabilities, with no further integration work on either side.

The components

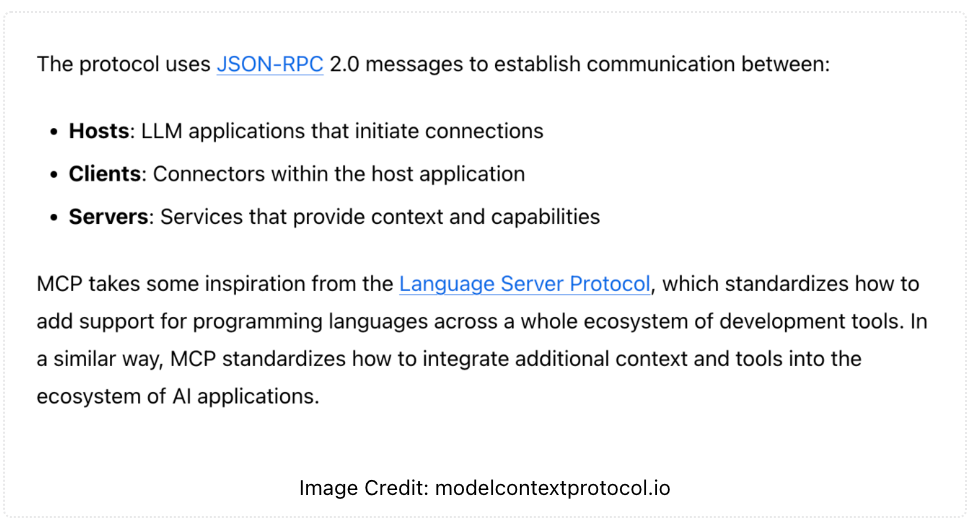

The protocol defines two roles, plus a wire format and a set of standard primitives.

MCP servers expose capabilities. A server might wrap a database, a file system, a Git repository, a JIRA project, a calendar, an internal API, or anything else. The server’s job is to describe what it offers and respond to requests from clients.

MCP clients are the AI applications that connect to those servers. A chat application, an IDE assistant, an autonomous agent: any of these can be a client. The client’s job is to discover what the server offers, decide when to call it, and feed the responses back into the model’s context.

Between the two sits a defined transport layer (typically stdio for local servers, Streamable HTTP for remote ones) and a defined set of message types based on JSON-RPC 2.0. The protocol does not prescribe what a server should do; it prescribes how a server and a client talk to each other.
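To make the wire format concrete, here is a minimal sketch in Python of what one request/response exchange might look like when framed for the stdio transport, where each message travels as a single line of JSON. The `tools/list` method name comes from the MCP specification; the helper names `encode_message` and `decode_message` and the `read_file` example tool are made up for illustration, and the initialisation handshake that a real session starts with is left out.

```python
import json


def encode_message(message: dict) -> str:
    """Serialise one JSON-RPC message as a single newline-terminated line."""
    # json.dumps escapes newlines inside strings, so the frame stays on one line.
    return json.dumps(message, separators=(",", ":")) + "\n"


def decode_message(line: str) -> dict:
    """Parse one line back into a message and check the JSON-RPC version tag."""
    message = json.loads(line)
    if message.get("jsonrpc") != "2.0":
        raise ValueError("not a JSON-RPC 2.0 message")
    return message


# Client to server: ask which tools are available.
request = {"jsonrpc": "2.0", "id": 1, "method": "tools/list"}

# Server to client: a response carries the same id as the request it answers.
response = {
    "jsonrpc": "2.0",
    "id": 1,
    "result": {
        "tools": [
            {
                "name": "read_file",  # hypothetical tool
                "description": "Read a text file",
                "inputSchema": {"type": "object"},
            }
        ]
    },
}

wire = encode_message(request)
round_tripped = decode_message(wire)
```

The `id` field is what lets a client match each response to the request it sent, which matters once several requests are in flight at the same time.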

Within that frame, MCP standardises three core primitives that servers can expose:

- Tools. Functions the model can invoke (read a file, query a database, send an email). Each tool exposes a name, a description, and a parameter schema. Model-controlled.

- Resources. Read-only data the server can provide on request (the contents of a file, a database row, an API response). Application-controlled.

- Prompts. Reusable prompt templates the server publishes for the client to use, often with parameters. User-controlled.

Of these, tools are what most developers encounter first; resources and prompts cover the cases where the application or the user, rather than the model, decides what enters the context. The protocol also defines client-side primitives such as sampling (servers requesting model completions from the client) and elicitation (servers requesting input from the user), along with utilities such as logging.
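As an illustration of the tool primitive, the sketch below shows a tool description with the name, description, and parameter schema mentioned above, plus a matching invocation. The `name`, `description`, and `inputSchema` fields and the `tools/call` method follow the MCP specification; the `query_orders` tool, its parameters, and the helper functions are hypothetical, and a real client would likely use a full JSON Schema validator rather than this required-field check.

```python
# A tool as a server might advertise it (hypothetical example tool).
query_orders_tool = {
    "name": "query_orders",
    "description": "Look up orders for a customer",
    "inputSchema": {
        "type": "object",
        "properties": {
            "customer_id": {"type": "string"},
            "limit": {"type": "integer"},
        },
        "required": ["customer_id"],
    },
}


def missing_arguments(tool: dict, arguments: dict) -> list[str]:
    """Return the required parameter names that are absent from `arguments`."""
    required = tool["inputSchema"].get("required", [])
    return [name for name in required if name not in arguments]


def build_tool_call(request_id: int, tool: dict, arguments: dict) -> dict:
    """Build a JSON-RPC `tools/call` request, refusing incomplete arguments."""
    missing = missing_arguments(tool, arguments)
    if missing:
        raise ValueError(f"missing required arguments: {missing}")
    return {
        "jsonrpc": "2.0",
        "id": request_id,
        "method": "tools/call",
        "params": {"name": tool["name"], "arguments": arguments},
    }


call = build_tool_call(7, query_orders_tool, {"customer_id": "c-42"})
```

The schema is what allows a client to present the tool to a model without knowing anything else about the server behind it.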

Why this matters operationally

The standardisation has three concrete effects on how developers build with AI today.

One integration per tool, not one per AI assistant. A company that builds an internal MCP server exposing its proprietary data can connect that server to Claude, to a developer’s IDE assistant, and to an internal autonomous agent, with the same code. The cost of adding an AI capability across the developer stack drops from “rebuild integration N times” to “build once, configure many times.”

Open marketplace of capabilities. Because the protocol is open, third parties have started shipping MCP servers for common services: databases, file systems, project management tools, version control. A developer who needs their AI assistant to see their GitHub issues can pull a community-maintained GitHub MCP server, configure it once, and skip the work of writing an integration.

Local-first by default. The original MCP design favoured local processes connected via stdio. This is intentionally low-ceremony: a server is just a program that speaks the protocol over standard input and output. The local-first default makes it easy to run servers that have direct, unmediated access to local files, local databases, and local tools, without exposing any of that over a network. Streamable HTTP transports exist for the cases where a remote server is appropriate, but the default is local.
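To show how little ceremony the stdio approach involves, here is a rough sketch of a server loop in Python. It reads one JSON-RPC message per line and writes one response per line. The function names, the `echo` tool, and the error handling are illustrative assumptions; a real server would also implement the initialisation handshake and would normally be built on an official SDK. The demonstration at the end feeds the loop from in-memory streams instead of the real standard input.

```python
import io
import json
import sys

# Hypothetical single-tool catalogue for the sketch.
TOOLS = [{
    "name": "echo",
    "description": "Return the given text unchanged",
    "inputSchema": {
        "type": "object",
        "properties": {"text": {"type": "string"}},
        "required": ["text"],
    },
}]


def error(request_id, code: int, message: str) -> dict:
    return {"jsonrpc": "2.0", "id": request_id,
            "error": {"code": code, "message": message}}


def handle(request: dict) -> dict:
    """Map one request to one response."""
    request_id = request["id"]
    method = request.get("method")
    if method == "tools/list":
        return {"jsonrpc": "2.0", "id": request_id, "result": {"tools": TOOLS}}
    if method == "tools/call":
        params = request.get("params", {})
        if params.get("name") != "echo":
            return error(request_id, -32602, "Unknown tool")  # invalid params
        text = params.get("arguments", {}).get("text", "")
        return {"jsonrpc": "2.0", "id": request_id,
                "result": {"content": [{"type": "text", "text": text}]}}
    return error(request_id, -32601, "Method not found")


def serve(stdin=sys.stdin, stdout=sys.stdout) -> None:
    """Read newline-delimited requests and write newline-delimited replies."""
    for line in stdin:
        if not line.strip():
            continue
        request = json.loads(line)
        if "id" not in request:  # notifications expect no reply
            continue
        stdout.write(json.dumps(handle(request)) + "\n")
        stdout.flush()


# Demonstration with in-memory streams standing in for a client.
requests = [
    {"jsonrpc": "2.0", "id": 1, "method": "tools/list"},
    {"jsonrpc": "2.0", "id": 2, "method": "tools/call",
     "params": {"name": "echo", "arguments": {"text": "hi"}}},
]
fake_stdin = io.StringIO("".join(json.dumps(r) + "\n" for r in requests))
fake_stdout = io.StringIO()
serve(fake_stdin, fake_stdout)
replies = [json.loads(line) for line in fake_stdout.getvalue().splitlines()]
```

In a real deployment the demonstration lines would be replaced by a plain `serve()` call, so the process talks to whichever client launched it.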

The early adopters

Anthropic’s November 2024 launch blog named two companies, Block and Apollo, as early adopters that had integrated MCP into their systems, and four developer-tool companies, Zed, Replit, Codeium, and Sourcegraph, as working with MCP to enhance their platforms.

The pattern in that list is worth noting. The launch did not lead with consumer applications or large model vendors. It led with companies whose products are developer tools or whose engineering organisations rely heavily on AI in their internal workflows. That choice signals MCP’s initial centre of gravity: the developer-tooling ecosystem, not the consumer-chatbot one.

In the year since the launch the spec has spread well beyond that initial set. Community-maintained server lists now cover dozens of services. Multiple non-Anthropic AI clients have shipped MCP support, including major IDE integrations. The protocol has become the de facto reference for what a portable AI-tool integration looks like.

What MCP does not do

Three things the protocol explicitly does not solve are worth naming, because they show up in evaluation conversations.

It is not a model-to-model protocol. MCP defines how a model talks to external tools and data sources, not how two models talk to each other or how a model is hosted. The shape of the model API, the prompting interface, and the deployment topology are all left to other layers.

It is not an authentication or authorisation framework, although it accommodates them. The protocol assumes the client has already established trust with the server (and vice versa) before connecting. How that trust is established is left to the implementer, usually OS-level user permissions for local servers, or standard HTTP auth (OAuth is recommended) for remote ones. Building a hardened MCP deployment for a multi-tenant SaaS still requires the same identity and access-control thinking it would for any other integration.

It does not prescribe what the model should do with a tool. A server exposes a tool. Whether the model actually calls it, in what order, with what parameters, is the model’s decision based on its training and its prompt. The protocol moves the integration. The agent logic remains in the model and the client’s prompting.
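The division of labour can be sketched as follows. Everything here is a stand-in: `FakeServer` plays the role of an MCP server, `choose_action` plays the role of the model, and neither corresponds to a real SDK or model API. The point is only that the protocol-shaped parts (listing and calling tools) are separate from the decision about whether and how to call them.

```python
class FakeServer:
    """Stand-in for an MCP server that offers a single tool."""

    def list_tools(self) -> list[dict]:
        return [{"name": "get_time", "description": "Return the current time as text"}]

    def call_tool(self, name: str, arguments: dict) -> str:
        if name != "get_time":
            raise KeyError(name)
        return "12:00"  # fixed value so the sketch is deterministic


def choose_action(user_message: str, tools: list[dict], context: list[str]) -> dict:
    """Stand-in for the model: decide whether to call a tool or to answer."""
    tool_names = {tool["name"] for tool in tools}
    wants_time = "time" in user_message.lower()
    if wants_time and "get_time" in tool_names and not context:
        return {"type": "tool_call", "name": "get_time", "arguments": {}}
    return {"type": "answer", "text": f"Done. Tool results seen: {context}"}


def run_turn(server: FakeServer, user_message: str, max_steps: int = 3) -> str:
    tools = server.list_tools()  # protocol: discovery
    context: list[str] = []
    for _ in range(max_steps):
        action = choose_action(user_message, tools, context)  # model: decision
        if action["type"] == "answer":
            return action["text"]
        result = server.call_tool(action["name"], action["arguments"])  # protocol: invocation
        context.append(result)  # client: feed the result back to the model
    return "Stopped: step limit reached"


answer = run_turn(FakeServer(), "What time is it?")
greeting = run_turn(FakeServer(), "Hello")
```

Swapping in a different `choose_action` changes the agent's behaviour without touching the server, which is the separation the protocol is designed around.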

Security implications

The same property that makes MCP powerful (tools and resources are connected to the AI’s context with a single configuration step) makes it a meaningful new surface for security architects.

If you connect an MCP server with broad filesystem access to a chat client, the model now has filesystem access. If the chat client is exposed to user input that can be controlled by an attacker (see the separate piece on prompt injection), the attacker can in principle steer the model to do things with that filesystem the user did not intend. The blast radius scales with the privileges of the MCP servers configured, not with the model itself.

The practical implication: treat the MCP server’s capabilities as the privileges the AI client effectively has. A read-only database server is a contained risk. A server that can send emails, write files, or invoke shell commands is something to deploy with the same care as any other privileged automation.
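One way to act on this is to gate tool calls in the client according to an explicit policy. The sketch below is a hypothetical illustration, not part of the protocol: the tool names, the `POLICY` table, and the `authorize` function are all invented for the example. Tools that are not listed are denied by default.

```python
from enum import Enum


class Decision(Enum):
    ALLOW = "allow"        # low-risk, e.g. read-only access
    ASK_USER = "ask_user"  # side effects: require explicit confirmation
    DENY = "deny"          # never callable from this client


# Hypothetical policy table keyed by tool name.
POLICY = {
    "db_read": Decision.ALLOW,
    "send_email": Decision.ASK_USER,
    "write_file": Decision.ASK_USER,
    "run_shell": Decision.DENY,
}


def authorize(tool_name: str, user_approved: bool = False) -> bool:
    """Return True only if the policy permits this call."""
    decision = POLICY.get(tool_name, Decision.DENY)  # default-deny unknown tools
    if decision is Decision.ALLOW:
        return True
    if decision is Decision.ASK_USER:
        return user_approved
    return False
```

A gate like this does not stop prompt injection, but it limits what a steered model can reach: a call to `run_shell` is refused no matter what the model was persuaded to request.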

How to evaluate MCP for your stack

Three questions matter when deciding whether to invest in MCP for a given project.

Are the AI clients you plan to use MCP-compatible? Most current development environments and major AI clients now are, but check the specific tool you intend to deploy.

Are the systems you need to integrate already exposed via an MCP server, either officially or by the community? If yes, the integration cost is roughly zero. If no, you are writing a new server, which is a real but bounded project.

What is the trust model between client and server? Local stdio servers have the trust model of any local process. Remote servers need the same hardening as any internal API. Get this right at design time, not after the first incident.

Further reading: Anthropic’s original launch announcement remains the cleanest one-page overview. The Model Context Protocol website is the canonical specification and SDK source, with the protocol licensed under Apache 2.0 / MIT and the documentation under CC BY 4.0. The Hugging Face blog post on MCP’s ecosystem and adoption is a good outside view on the protocol’s spread.
