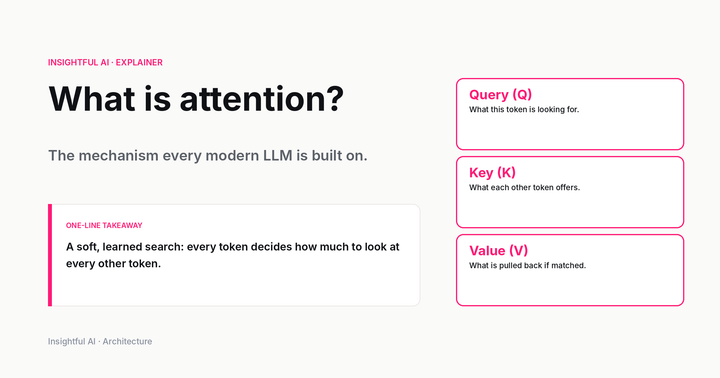

What is attention? The mechanism behind every modern LLM

The mechanism every modern LLM is built on, explained from scratch: queries, keys, and values (Q/K/V); the scaled dot-product formula, walked through term by term; multi-head attention; positional encoding; and the engineering variants in use in 2026. Includes a live interactive lab.