What is the NIST AI Risk Management Framework?

By Maya Rodriguez, Insightful AI Desk

If your company builds or deploys AI in the United States, there is a non-trivial chance that someone — a customer, a federal contracting officer, a state regulator, or your own audit committee — will ask whether your work conforms to the NIST AI Risk Management Framework. The right answer is rarely "yes" or "no." The right answer requires understanding what the framework actually is, what conforming to it actually means, and what the consequences of not conforming are.

This post is that briefing. We walk through the framework's origins, its four core functions, what it asks organisations to do, how its July 2024 Generative AI Profile sits on top, where it is mandatory versus voluntary, and how it compares to the EU AI Act for organisations that operate on both sides of the Atlantic.

1. What the NIST AI RMF is, in one paragraph

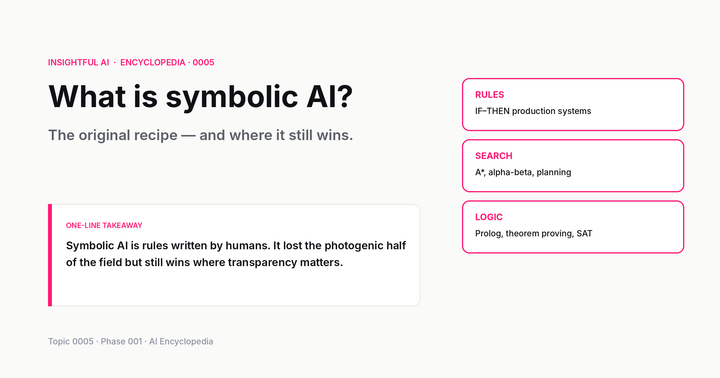

The Artificial Intelligence Risk Management Framework (AI RMF 1.0) is a voluntary guidance document published by the United States National Institute of Standards and Technology (NIST) in January 2023. NIST is a non-regulatory agency inside the US Department of Commerce. Its role across many domains — from cybersecurity (the well-known Cybersecurity Framework) to cryptography to measurement standards — is to write technical guidance that the rest of the federal government, the regulated industries, and the private sector adopt by reference rather than by statute. The AI RMF follows the same pattern: it is not a law, but it is increasingly cited as the standard of care for "trustworthy AI" in US settings.

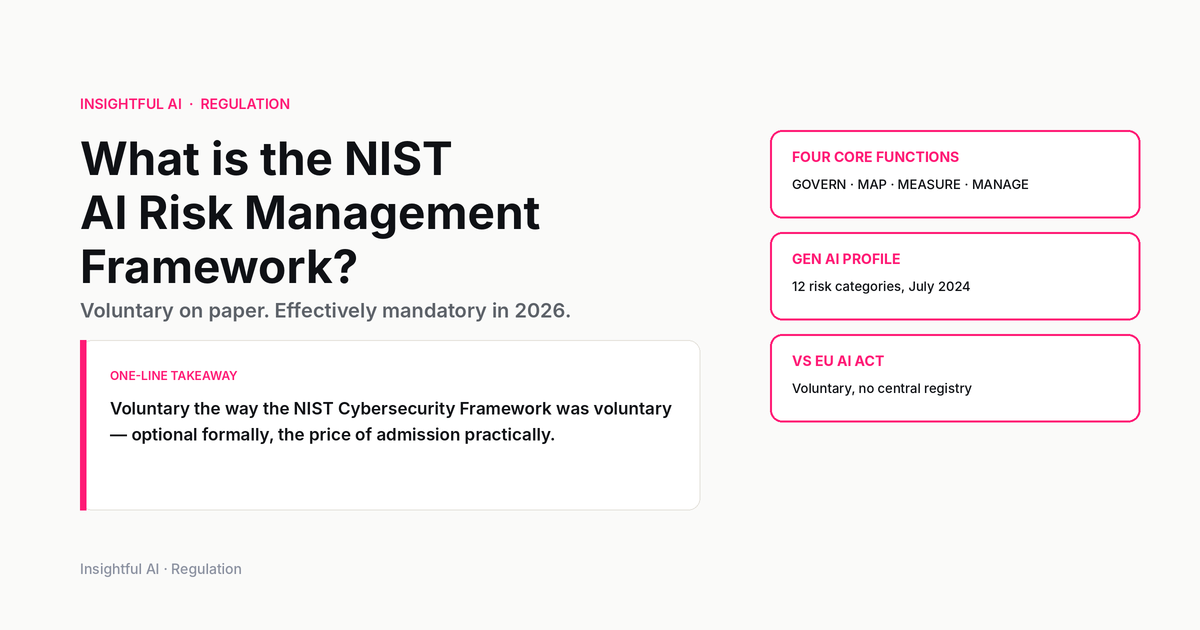

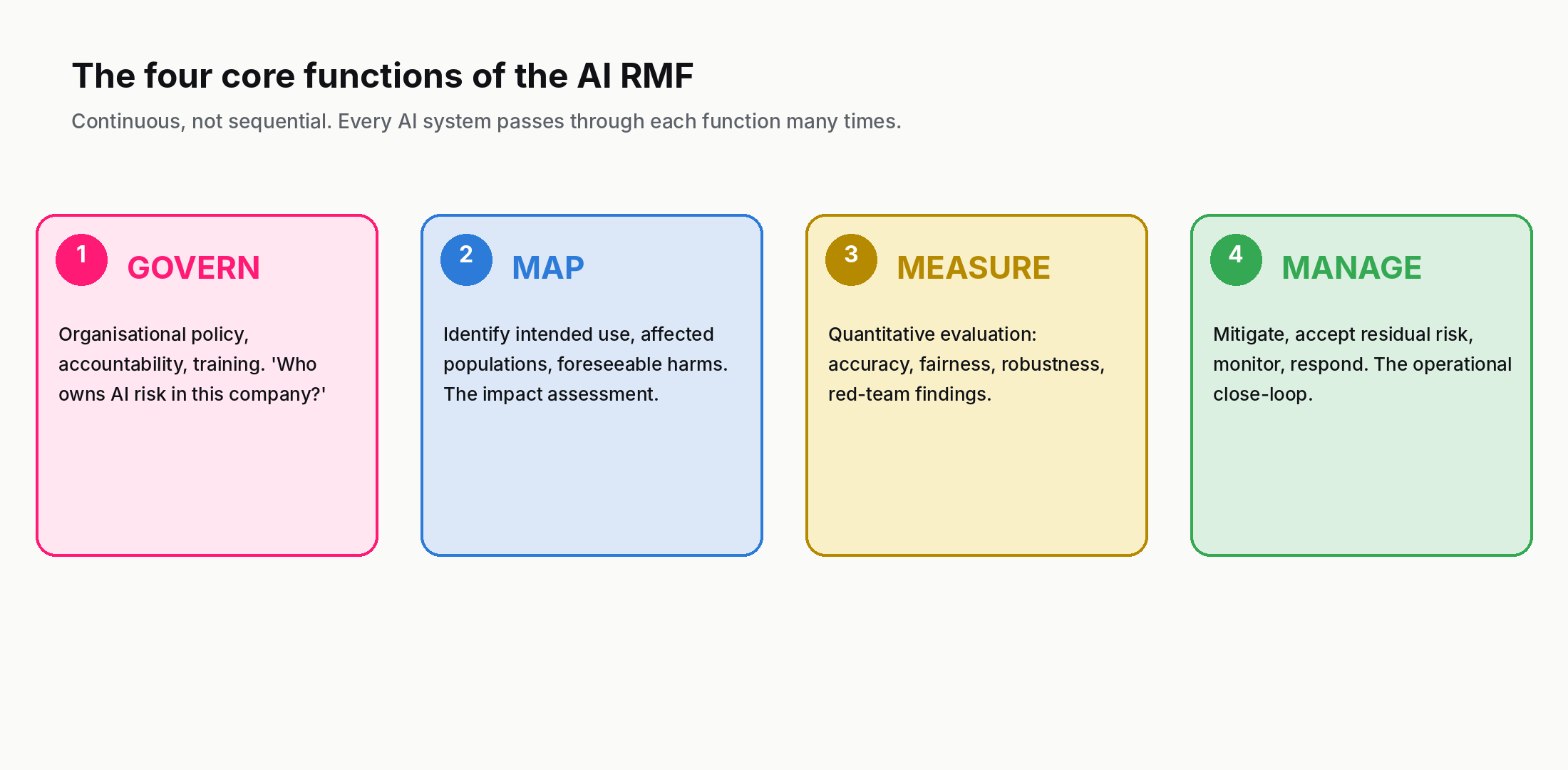

The framework was authorised by the National Artificial Intelligence Initiative Act of 2020, drafted through roughly 18 months of public consultation, and is structured around four high-level functions that an organisation should perform across the lifecycle of any AI system: GOVERN, MAP, MEASURE, MANAGE. We will walk through each of these in section 3.

NIST released a major supplement in July 2024: NIST AI 600-1, Artificial Intelligence Risk Management Framework: Generative Artificial Intelligence Profile. The Gen AI Profile takes the four core functions and applies them to the specific risks of generative AI systems, including hallucination, intellectual-property leakage, CBRN information uplift, prompt injection, and harmful content generation. As of 2026, the Gen AI Profile is the document organisations most frequently reference when discussing AI RMF compliance.

2. Mandatory or voluntary — and why the answer matters

The AI RMF is formally voluntary. NIST has no rulemaking authority. There is no federal statute that says "you shall conform to the AI RMF." For most private-sector companies, the framework is a published reference, nothing more.

That said, three categories of organisation have effectively binding obligations to align with it.

Federal contractors and agencies. Executive Order 14110 ("Safe, Secure, and Trustworthy Development and Use of Artificial Intelligence"), signed 30 October 2023, directed federal agencies to use NIST guidance on AI risk management. OMB operationalised it in 2024 through Memo M-24-10 (governance of agency AI use, issued in March) and Memo M-24-18 (responsible AI acquisition, issued in the autumn). The Trump administration rescinded EO 14110 on 20 January 2025 and signed EO 14179 ("Removing Barriers to American Leadership in Artificial Intelligence") on 23 January 2025, and in April 2025 OMB replaced the two memos with M-25-21 and M-25-22, as EO 14179 had ordered. Even so, much of the risk-management practice built around the AI RMF carried over into agency procurement through 2025–2026. Companies selling AI to the US federal government continue to be asked, in practice, to attest to AI RMF alignment.

Companies in sectors with active regulators citing the AI RMF. The US Department of Health and Human Services, the Department of Education, the Department of Labor, and several state insurance regulators have issued guidance that points to the AI RMF as the expected risk-management standard for AI in their domains. A health system deploying an AI triage tool, for example, is operating in a domain where its state attorney general or the HHS Office for Civil Rights may treat "did you align with the NIST AI RMF?" as the central question in any investigation.

Companies adopting it voluntarily for liability and contracting reasons. By 2026 the AI RMF has become a de facto checklist that large enterprise buyers attach to their AI vendor questionnaires. Saying "we are aligned with the NIST AI RMF" — and being able to point at documentation that supports the claim — is increasingly the price of admission to enterprise sales. The framework's voluntary status, in this sense, is a technicality. The market has made it mandatory.

There is a fourth, less-discussed category: companies adopting it as defensive litigation infrastructure. In the United States the standard of care that a court applies to a novel-technology dispute is shaped substantially by what reasonable industry practice looks like at the time. An AI vendor that has documented its MAP, MEASURE, and MANAGE work according to the AI RMF is positioning itself better in any future product-liability, discrimination, or negligence suit than one that has not. The framework's documentation outputs are, partly, a paper trail designed to be readable by a jury.

3. The four core functions, walked through

The substance of the framework is its four "functions." Each is a continuous activity — not a one-time gate — that organisations are expected to perform across the lifecycle of every AI system they deploy.

GOVERN. Establish organisational policies, processes, and accountability for AI risk management. This is the umbrella function — it asks an organisation to answer questions like who in this company owns AI risk decisions, what is our written AI policy, how do we train staff, what is our incident-response process when an AI system fails or causes harm. GOVERN is largely about documentation and organisational design rather than technical artefacts. It is the function that auditors look at first because its outputs are concrete documents an organisation either has or doesn't.

MAP. Identify the context in which an AI system will be used and the risks that arise from that context. MAP is the analytical step: before you build or deploy a system, you describe its intended purpose, its users, its operating environment, the data flowing in and out, the populations it affects, and the foreseeable ways it could cause harm. The output of MAP is typically an "AI use-case description" or "impact assessment" document — analogous in spirit to a data-protection impact assessment under GDPR but specific to AI risks.

MEASURE. Quantify and analyse the risks identified during MAP. MEASURE is where the technical evaluation happens: how accurate is the system across demographic subgroups, how robust is it under distribution shift, what is its calibration like, what fraction of its outputs require human review, what is its failure rate. MEASURE outputs are test reports, evaluation metrics, fairness analyses, robustness tests, and red-team findings. The Gen AI Profile elaborates this function for LLMs: hallucination rates, jailbreak resistance, prompt-injection vulnerability, IP-leakage rates, harmful-content rates.
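As one illustration of a MEASURE output, accuracy across demographic subgroups can be computed from labelled evaluation records. The sketch below is a minimal example, not something the AI RMF prescribes: the function names, the subgroup labels, and the records are all hypothetical.

```python
from collections import defaultdict


def accuracy_by_subgroup(records):
    """Return {subgroup: accuracy} from (subgroup, y_true, y_pred) records."""
    correct = defaultdict(int)
    total = defaultdict(int)
    for subgroup, y_true, y_pred in records:
        total[subgroup] += 1
        if y_true == y_pred:
            correct[subgroup] += 1
    return {group: correct[group] / total[group] for group in total}


def largest_gap(per_group):
    """Difference between the best and the worst subgroup accuracy."""
    values = list(per_group.values())
    return max(values) - min(values)


# Hypothetical evaluation records: (subgroup, true label, predicted label).
records = [
    ("group_a", 1, 1), ("group_a", 0, 0), ("group_a", 1, 1), ("group_a", 0, 1),
    ("group_b", 1, 0), ("group_b", 0, 0), ("group_b", 1, 0), ("group_b", 0, 0),
]
per_group = accuracy_by_subgroup(records)
gap = largest_gap(per_group)
```

A report built this way gives the MANAGE function something concrete to act on: a gap between subgroups is a measured risk that someone has to mitigate or explicitly accept.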

MANAGE. Allocate resources and act on the measured risks — mitigate the high-priority ones, accept the residual ones with documented justification, monitor for change. MANAGE is the operational function: it closes the loop. The outputs include risk-mitigation plans, monitoring dashboards, incident-response playbooks, change-control logs, and the documentation an organisation produces when it decides to deploy a system with known residual risk.
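The mitigate-or-accept decision can be made mechanical enough to audit. The sketch below assumes a likelihood-times-impact score on 1 to 5 scales and a mitigation threshold of 12; the scales, the threshold, and the names are illustrative assumptions, since the AI RMF does not mandate any particular scoring method.

```python
from dataclasses import dataclass


@dataclass
class MeasuredRisk:
    name: str
    likelihood: int          # 1 (rare) to 5 (frequent); hypothetical scale
    impact: int              # 1 (minor) to 5 (severe); hypothetical scale
    justification: str = ""  # needed before residual risk can be accepted


def triage(risk, mitigate_at=12):
    """Return 'mitigate', 'accept', or 'needs-justification' for one risk."""
    score = risk.likelihood * risk.impact
    if score >= mitigate_at:
        return "mitigate"
    # Residual risk is accepted only when a justification is documented.
    return "accept" if risk.justification.strip() else "needs-justification"


# Hypothetical risks carried over from a MEASURE report.
risks = [
    MeasuredRisk("prompt injection", 4, 4),
    MeasuredRisk("minor formatting errors", 2, 1, "Cosmetic only; reviewed quarterly"),
    MeasuredRisk("stale retrieval index", 2, 3),
]
decisions = {risk.name: triage(risk) for risk in risks}
```

The third outcome is the useful one: it flags low-scoring risks that nobody has formally accepted yet, which is the gap an auditor tends to find.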

The framework explicitly states that the four functions are not sequential. An organisation does not finish GOVERN and then move to MAP — all four functions are active continuously, and each AI system passes through each of them many times as it evolves.

4. The Generative AI Profile (July 2024) — what changed

The original AI RMF was largely drafted before ChatGPT's public release in November 2022, and its language is general enough to cover any AI system: classifier, recommendation engine, autonomous vehicle, predictive maintenance model. By 2024 it was clear that generative AI systems posed risks the original framework's general language did not specifically address. NIST published NIST AI 600-1, the Generative AI Profile, in July 2024 to fill that gap.

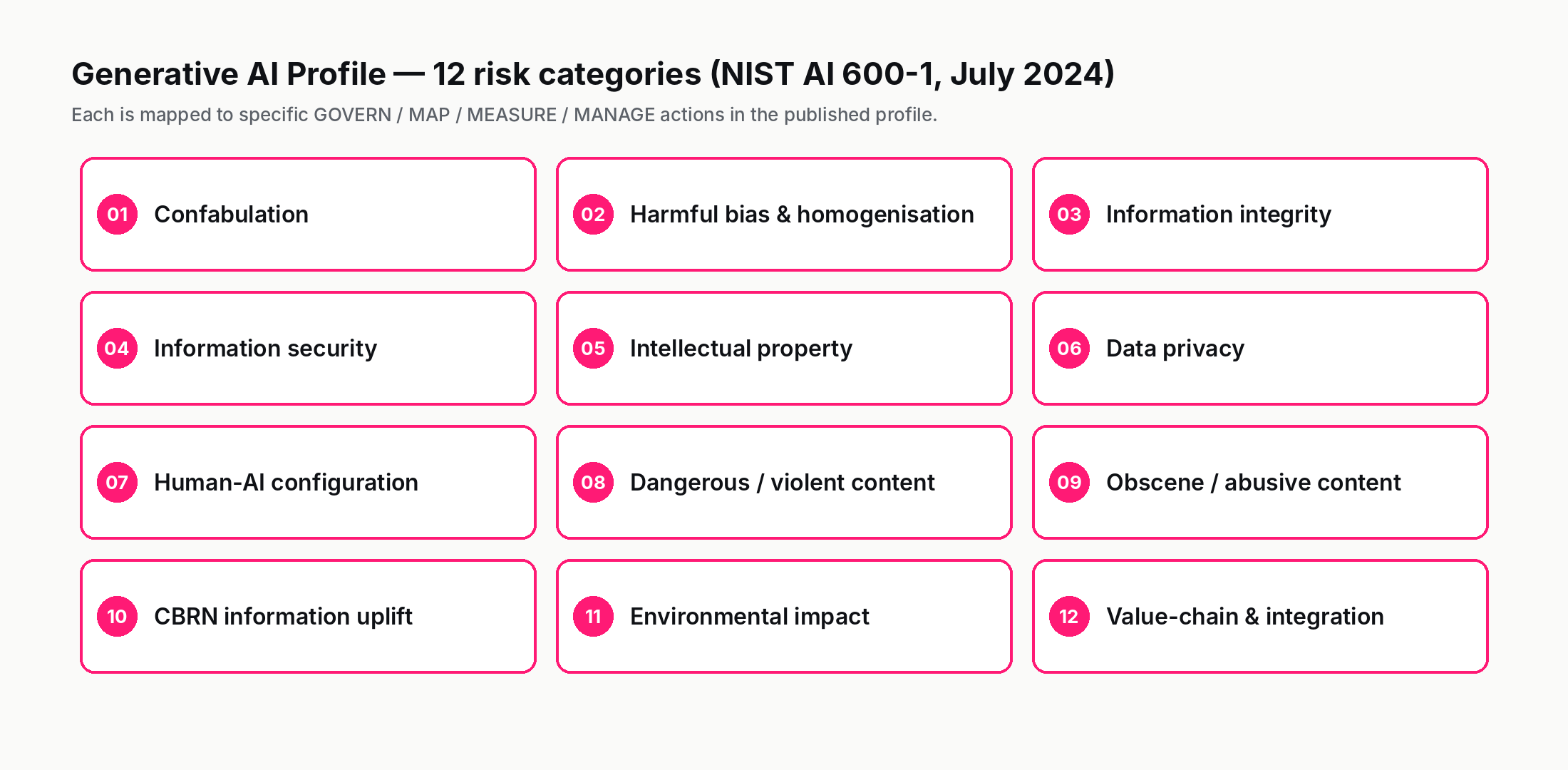

The Gen AI Profile does not replace the AI RMF. It is structured as a profile — a mapping that ties each of the four core functions to specific risks that are characteristic of generative AI. The risks identified include:

- Confabulation. The system produces fluent text that is factually wrong (the term NIST uses for what most of the field calls "hallucination").

- Dangerous, violent, or hateful content. Outputs that promote or instruct in violence, self-harm, or hate.

- CBRN information or capabilities. Outputs that could uplift a bad actor's capability to develop chemical, biological, radiological, or nuclear weapons.

- Data privacy. Including the possibility that personal data from training corpora can be extracted via clever prompting.

- Environmental impact. Energy and water footprint of training and inference at scale.

- Harmful bias and homogenisation. Output that reflects or amplifies discriminatory patterns in training data.

- Human-AI configuration. Misuse stemming from the interface design or unrealistic user assumptions about model capabilities.

- Information integrity. Deep-fakes, synthetic media, and the broader impact on what counts as a verifiable source.

- Information security. Prompt injection, model poisoning, supply-chain risk in foundation models.

- Intellectual property. Both inputs (training-data IP) and outputs (generated content that may infringe).

- Obscene, degrading, and/or abusive content. Including synthetic child sexual abuse material and non-consensual intimate imagery.

- Value chain and component integration. The risk that comes from composing third-party models, prompts, and tools into a single system.

For each of these twelve risk categories, the Gen AI Profile lists specific actions an organisation should take under GOVERN, MAP, MEASURE, and MANAGE. A compliance team's working copy of the Gen AI Profile typically takes the form of a matrix: rows are the twelve risks, columns are the four functions, cells are the specific organisational and technical controls that NIST recommends.
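The risk-by-function matrix can be held in a plain nested dictionary. The sketch below is one possible layout, not a NIST artefact: the risk names follow the list above, while the helper names and the sample control are hypothetical.

```python
RISKS = [
    "Confabulation",
    "Dangerous, violent, or hateful content",
    "CBRN information or capabilities",
    "Data privacy",
    "Environmental impact",
    "Harmful bias and homogenisation",
    "Human-AI configuration",
    "Information integrity",
    "Information security",
    "Intellectual property",
    "Obscene, degrading, and/or abusive content",
    "Value chain and component integration",
]
FUNCTIONS = ["GOVERN", "MAP", "MEASURE", "MANAGE"]


def empty_matrix():
    """One row per risk, one column per function, each cell a list of controls."""
    return {risk: {function: [] for function in FUNCTIONS} for risk in RISKS}


def gaps(matrix):
    """Return every (risk, function) cell that has no control recorded."""
    return [
        (risk, function)
        for risk in RISKS
        for function in FUNCTIONS
        if not matrix[risk][function]
    ]


matrix = empty_matrix()
# Hypothetical control, written by the compliance team rather than quoted from NIST.
matrix["Confabulation"]["MEASURE"].append(
    "Track factual-error rate on a held-out question set"
)
open_cells = gaps(matrix)
```

Listing the empty cells, rather than the filled ones, keeps attention on the work that remains: 48 cells in total, 47 still open after the single entry above.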

5. What "AI RMF compliance" actually requires in practice

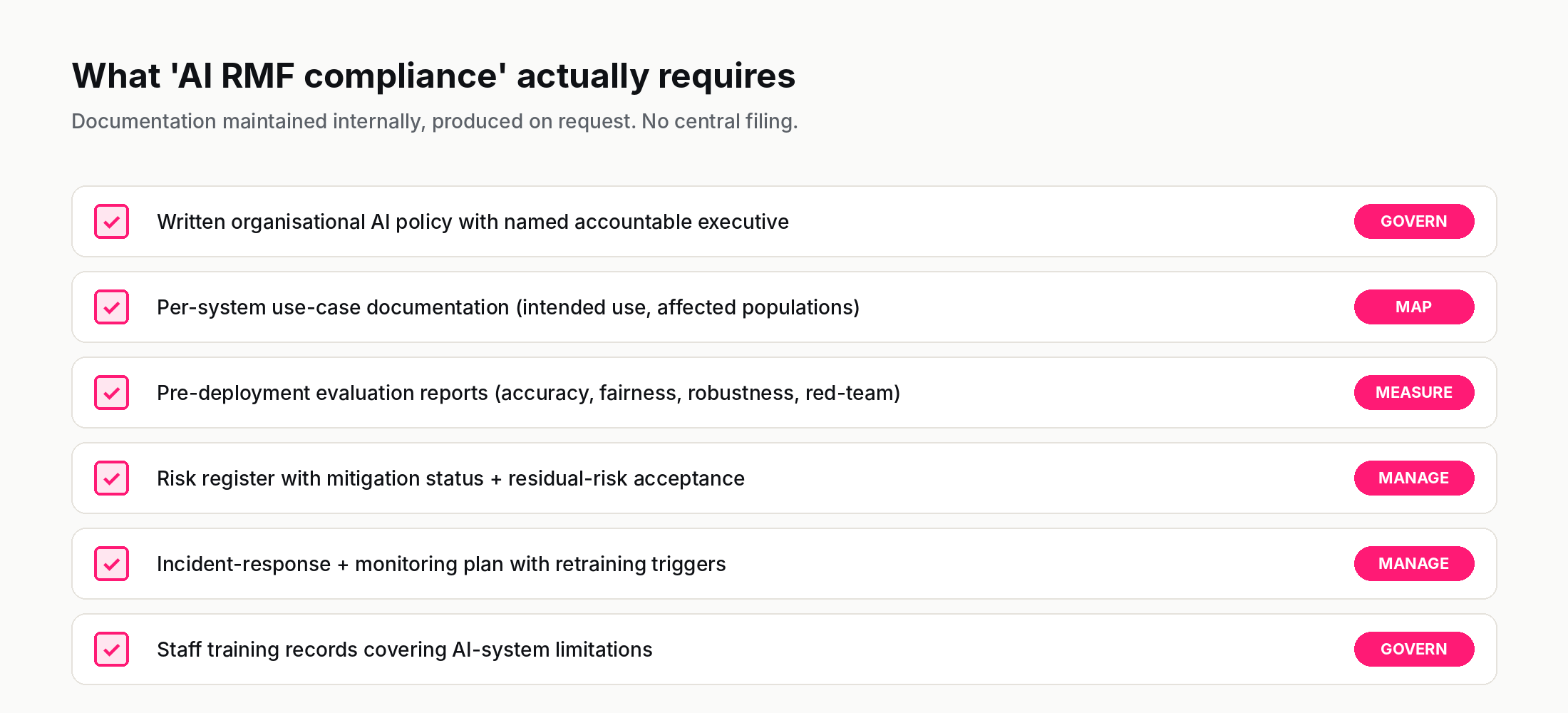

Because the framework is voluntary and structurally a guidance document, there is no auditor that issues a "NIST AI RMF compliance certificate." What organisations actually mean when they say "we are aligned with the AI RMF" is some combination of these documented practices:

- A written organisational AI policy that names the executives accountable for AI risk decisions (the GOVERN deliverable).

- Per-system AI use-case documentation, including intended use, affected populations, and foreseeable harms (the MAP deliverable).

- Pre-deployment evaluation results, including accuracy / fairness / robustness testing, and red-team findings (the MEASURE deliverable).

- A documented risk register listing identified risks, mitigation status, and residual risk acceptance, signed by a named decision-maker (the MANAGE deliverable).

- An incident-response and monitoring plan, including triggers for retraining, deactivation, or escalation (also MANAGE).

- Staff training records demonstrating that employees who interact with the AI system understand its limitations.
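The list above can double as a per-system checklist. The sketch below assumes each deliverable is tracked as a reference to a document on file; the keys, the file paths, and the GOVERN tag on staff training records are illustrative assumptions.

```python
# Deliverable -> AI RMF function, following the list above.
DELIVERABLES = {
    "organisational_ai_policy": "GOVERN",
    "use_case_documentation": "MAP",
    "pre_deployment_evaluation": "MEASURE",
    "risk_register": "MANAGE",
    "incident_response_plan": "MANAGE",
    "staff_training_records": "GOVERN",  # assumption: the list does not tag this one
}


def missing_by_function(evidence):
    """Group deliverables with no evidence on file by AI RMF function.

    `evidence` maps a deliverable key to a document reference; an absent key
    or an empty string both count as missing.
    """
    missing = {}
    for deliverable, function in DELIVERABLES.items():
        if not evidence.get(deliverable):
            missing.setdefault(function, []).append(deliverable)
    return missing


# Hypothetical evidence for one system; the paths are placeholders.
evidence = {
    "organisational_ai_policy": "policies/ai-policy-v2.pdf",
    "use_case_documentation": "systems/triage-tool/use-case.md",
    "risk_register": "",
}
report = missing_by_function(evidence)
```

Grouping the gaps by function makes it clear which team owes the missing document, which is usually the first question a vendor questionnaire prompts internally.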

A second-tier vendor or a small consultancy can ship these as templates; a large enterprise typically builds them into its existing risk-management workflow (ISO 27001 or similar). The work is real but rarely deeply technical. A regulated industry adds an extra layer — for example, a clinical decision-support system would also need an FDA submission package — but the AI RMF deliverables sit alongside those, not in conflict with them.

The key distinction from EU AI Act compliance is that AI RMF documentation is not filed with anyone. There is no central registry. The documents live with the organisation and are produced on request. This is one reason the AI RMF can scale while still being voluntary: there is no agency that needs to receive and process the paperwork.

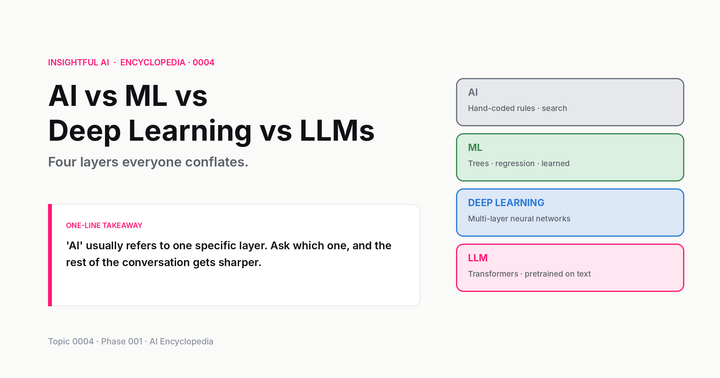

6. AI RMF vs EU AI Act — for organisations on both sides

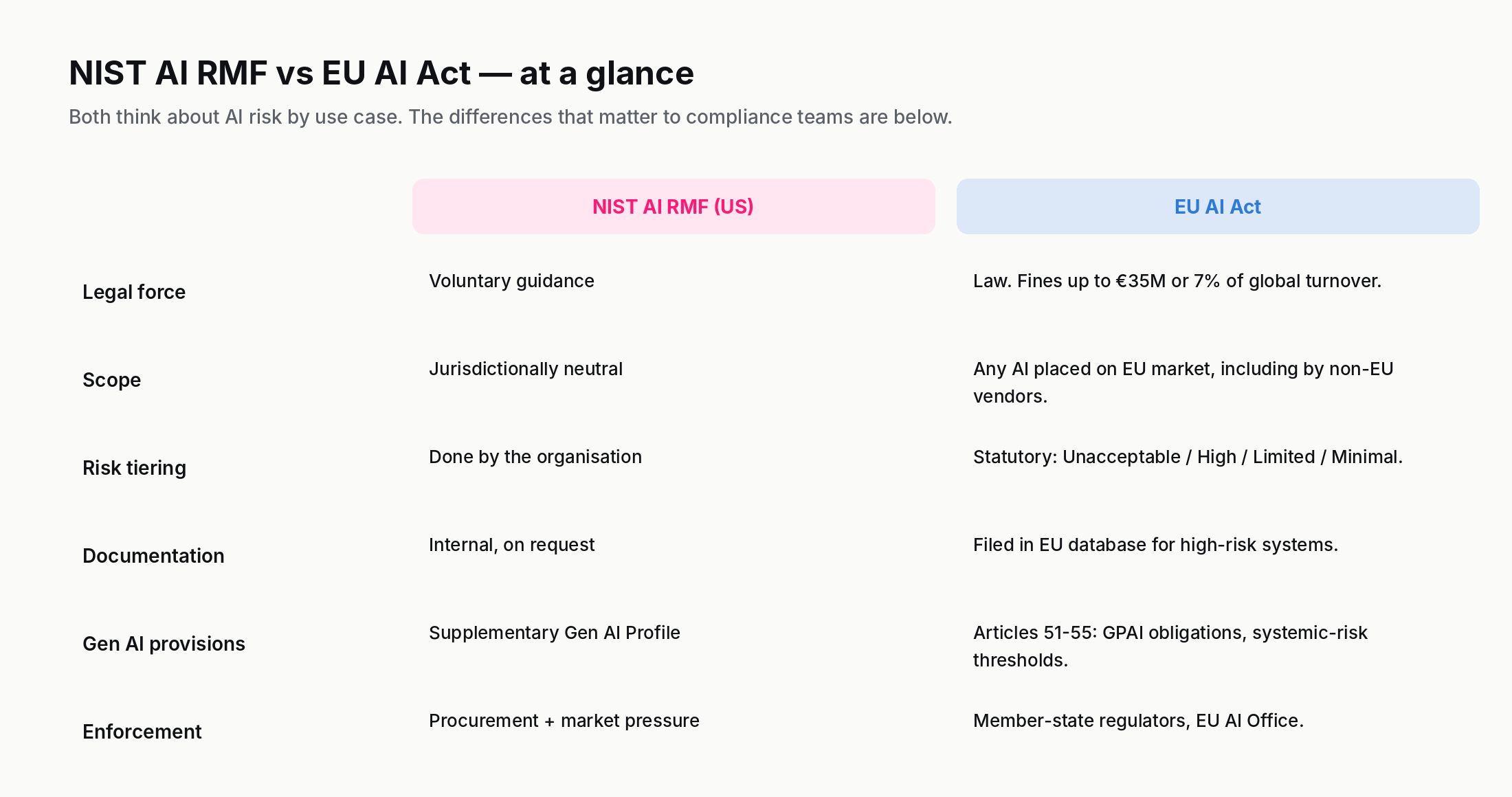

For multinational companies, the relevant comparison is to the EU AI Act, which entered into force on 1 August 2024 and whose obligations phase in between February 2025 and August 2027. The two frameworks share intellectual DNA (both think about AI risk in terms of the system's use case, not its underlying architecture) but differ on the dimensions that matter to a compliance team.

Legal force. The EU AI Act is law: violation of the prohibited-practice provisions in Article 5 can trigger fines up to €35 million or 7% of global annual turnover, whichever is higher (lower tiers apply to other obligations and to GPAI breaches). The AI RMF is voluntary guidance with no direct penalty.
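The "whichever is higher" rule is easy to misread, so here is the arithmetic as a short sketch. The function name and the turnover figures are hypothetical, and the calculation covers only the top-tier ceiling quoted above, not the lower tiers.

```python
def prohibited_practice_fine_ceiling(global_turnover_eur):
    """Upper bound for a top-tier fine: EUR 35 million or 7% of turnover."""
    # Multiply before dividing so round figures stay exact.
    return max(35_000_000, global_turnover_eur * 7 / 100)


# Hypothetical companies: EUR 200 million and EUR 1 billion in annual turnover.
small_vendor_ceiling = prohibited_practice_fine_ceiling(200_000_000)
large_vendor_ceiling = prohibited_practice_fine_ceiling(1_000_000_000)
```

For the smaller company 7% of turnover is EUR 14 million, so the fixed EUR 35 million figure is the ceiling; for the larger one the percentage dominates and the ceiling rises to EUR 70 million. The crossover sits at EUR 500 million in turnover.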

Scope. The EU AI Act applies to any AI system placed on the EU market, including by non-EU companies. The AI RMF is jurisdictionally neutral; an organisation outside the US can adopt it but doesn't have to.

Risk classification. The EU AI Act statutorily classifies systems into Unacceptable, High, Limited, and Minimal risk tiers, with the obligations differing per tier. The AI RMF leaves risk classification to the deploying organisation's MAP function, with no statutory tiering.

Documentation burden. The EU AI Act requires providers of high-risk systems to draw up technical documentation and to register the system in an EU database before it goes on the market. The AI RMF asks only for documentation maintained internally and produced on request.

Generative AI provisions. The EU AI Act treats general-purpose AI (GPAI) models as a separate category with their own obligations under articles 51–55, including transparency reports for "systemic-risk" foundation models. The AI RMF approaches generative AI through the supplementary Gen AI Profile rather than statutory tiers.

The practical result for a multinational AI vendor is that EU AI Act compliance is the binding constraint (because of the legal teeth), and AI RMF documentation is the easier-to-produce US-side artefact that the same compliance team generally maintains in parallel. The two sets of deliverables overlap substantially: a well-done EU AI Act technical documentation package is roughly 70–80% reusable as AI RMF MEASURE and MAP deliverables, and vice versa.

One nuance worth noting: the EU AI Act applies extraterritorially. A US-based vendor that ships AI products to EU customers is covered by the EU AI Act regardless of where the company is headquartered. The reverse is not true — a Europe-based vendor selling only into the EU is not under any AI RMF obligation. This asymmetry means that for most internationally active AI businesses, the highest-leverage compliance investment is the EU AI Act package, with AI RMF documentation maintained as the lighter US-side complement. Teams that try to design compliance work the other way around — AI RMF first, EU AI Act adapted from it — tend to find the EU side under-specified when audit time comes.

7. Where the framework is contested

The AI RMF is broadly respected in the US compliance community but it has its critics. The most common substantive objections fall into three categories.

The "checklist failure mode." Because the framework is voluntary and verification-light, an organisation can produce all the documentation NIST recommends without meaningfully reducing the risks the documentation is supposed to address. The MAP and MEASURE functions are particularly susceptible: an organisation can write a thorough impact assessment that no decision-maker actually reads, and run an extensive evaluation suite that doesn't influence whether the system is deployed. This critique is fair but is not unique to the AI RMF — it applies to most risk-management frameworks that lack independent verification.

Genericity. Some commentators argue that the framework is too high-level to be operationally actionable for any specific AI system. NIST has addressed this partly through the Gen AI Profile and partly through the AI RMF Playbook, a supplementary document that gives concrete suggested actions for each function. Still, organisations frequently complain that the framework tells them what to do without telling them how.

Capture risk. NIST consulted industry extensively while drafting, and some civil-society groups have argued that the final framework was softened in places where stronger language would have been appropriate. This is a recurring criticism of voluntary-framework drafting and not unique to the AI RMF.

None of these criticisms have produced a competing US framework that organisations actually use. The AI RMF remains the de facto standard in 2026.

8. Practical: what an organisation should actually do

If you are responsible for AI risk at an organisation that has not yet engaged with the AI RMF, the right starting point is short and tractable.

First, designate an accountable executive — the GOVERN function will not get done without a single person whose role explicitly includes "AI risk." This person does not need to be technical; they do need to have the authority to require teams to participate in MAP and MEASURE activities.

Second, take an inventory. List every AI system in production, every AI system in development, and every AI capability your organisation buys from a third party. For each, capture the intended use, the affected populations, and the data flowing in and out. This is the start of MAP.
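An inventory like this fits in a few lines of structured data. The sketch below is one possible record layout under stated assumptions: the class, the field names, and the two sample systems are hypothetical and not drawn from the AI RMF.

```python
from dataclasses import dataclass, field

REQUIRED = ("intended_use", "affected_populations", "data_in", "data_out")


@dataclass
class AISystemRecord:
    name: str
    status: str  # "production", "development", or "third-party"
    intended_use: str = ""
    affected_populations: list = field(default_factory=list)
    data_in: list = field(default_factory=list)
    data_out: list = field(default_factory=list)

    def missing_fields(self):
        """Names of the MAP inputs that are still empty for this system."""
        return [name for name in REQUIRED if not getattr(self, name)]


# Hypothetical inventory entries.
inventory = [
    AISystemRecord(
        "support-chatbot",
        "production",
        intended_use="Answer customer billing questions",
        affected_populations=["customers"],
        data_in=["chat messages"],
        data_out=["generated replies"],
    ),
    AISystemRecord("resume-screener", "third-party"),
]
incomplete = {
    record.name: record.missing_fields()
    for record in inventory
    if record.missing_fields()
}
```

Third-party capabilities tend to be the entries with the most empty fields, which is a reasonable signal for what to ask the vendor first.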

Third, pick one high-priority system from that inventory and walk it through the full four-function cycle. Treat it as a pilot: produce the MAP impact assessment, run a MEASURE evaluation, file a MANAGE risk register, and document the GOVERN decision to deploy or not deploy. The first system through this cycle is genuinely hard. The second is half as hard. The fifth is mostly templates.

Fourth, if the system is a generative AI system, walk the Gen AI Profile's twelve risk categories against your existing documentation. The Profile is short enough to read end-to-end in a sitting and concrete enough to point at specific gaps.

Fifth, design the documentation so that the EU AI Act team can reuse it when the EU AI Act's relevant tier obligations bind on your systems. This is the single highest-leverage investment for a multinational AI compliance function.

9. Where to read next

The primary source is the framework itself, which NIST hosts as a freely downloadable PDF: AI RMF 1.0. The Generative AI Profile is at NIST AI 600-1. Both documents are short — the core framework is 48 pages, the Gen AI Profile around 60 — and written in clear prose. They are unusually readable for federal-guidance documents.

The supplementary AI RMF Playbook on the NIST AI Resource Center is the operational guide most practitioners actually use day-to-day.

For the EU comparison side, our explainer on the EU AI Act covers the legislative side in plain English.

If you want the full curriculum, the entry point is the AI Encyclopedia — 130 phases, 2,600 concepts. Regulation as a topic is covered in Phase 114 (AI Ethics, Law, Policy and Society).

The one-line takeaway, if you keep one thing: the AI RMF is "voluntary" the way the NIST Cybersecurity Framework is "voluntary" — formally optional, practically mandatory for any organisation that sells AI to enterprises or to the US government.

Further reading: AI RMF 1.0 (NIST, January 2023), AI 600-1 Generative AI Profile (NIST, July 2024), OMB Memo M-24-10.
