What is the EU AI Act? A plain-English guide to the world's first comprehensive AI law

Regulation (EU) 2024/1689 is the EU's AI Act. Here's what it bans, what it requires, what it costs to violate, and when each provision applies.

By Maya Rodriguez, Insightful AI Desk

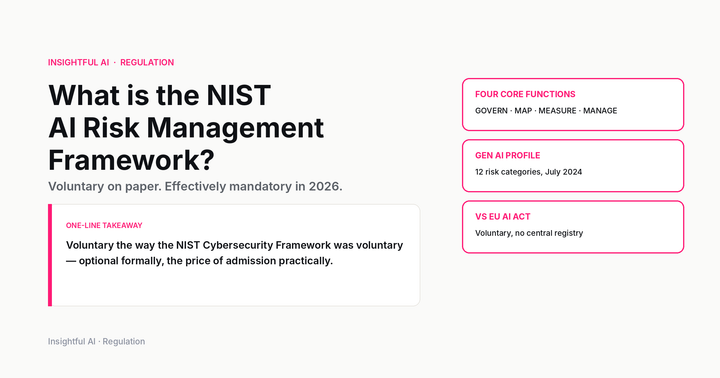

The first question any company subject to Regulation (EU) 2024/1689 has to answer is not what the AI Act requires, but where its own systems sit inside it. The regulation is built around a four-tier classification, and until a system has been placed into one of those tiers, none of the substantive obligations attach. Once it has, almost all of them are decided.

This is unusual for European product law, and it shapes everything else about how the Act is being implemented. The General Data Protection Regulation, the obvious comparator, attaches its core obligations to a single binary condition: are you processing personal data of EU residents? The AI Act asks a more granular question. Which of four risk categories does this specific system fall into? Many companies will run more than one classification in parallel. Some will find themselves in tiers they did not expect.

The Act entered into force on 1 August 2024 and is being phased in over several years. The penalties at the top of its scale sit above the GDPR’s most serious tier. Article 99, the penalty provision, sets the maximum for the most serious violations at “up to 35 000 000 EUR or, if the offender is an undertaking, up to 7 % of its total worldwide annual turnover, whichever is higher.” The architecture is built to land at board level.
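
As a rough illustration of the "whichever is higher" rule in the quoted Article 99 text, the ceiling for the most serious violations can be sketched in a few lines of Python. The function name and the turnover figures are hypothetical, and the sketch covers only the top penalty tier quoted above, not the lower tiers or how a fine would actually be set in a given case.

```python
from decimal import Decimal

# Figures taken from the Article 99 text quoted above (top penalty tier only).
FIXED_CAP_EUR = Decimal("35000000")
TURNOVER_SHARE = Decimal("0.07")


def max_fine_top_tier(worldwide_annual_turnover_eur: Decimal,
                      is_undertaking: bool = True) -> Decimal:
    """Return the upper bound of the fine for the most serious violations.

    Hypothetical helper: for an undertaking the ceiling is the higher of the
    fixed cap and 7 % of total worldwide annual turnover; otherwise only the
    fixed cap applies.
    """
    if not is_undertaking:
        return FIXED_CAP_EUR
    return max(FIXED_CAP_EUR, TURNOVER_SHARE * worldwide_annual_turnover_eur)


# Illustrative turnovers, not real companies.
small = max_fine_top_tier(Decimal("100000000"))    # 7 % is 7 000 000, below the fixed cap
large = max_fine_top_tier(Decimal("2000000000"))   # 7 % is 140 000 000, above the fixed cap
```

On these figures the turnover-based ceiling overtakes the fixed cap once worldwide annual turnover passes EUR 500 million, since 7 % of 500 million is 35 million.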

What the four tiers contain determines how the rest of the regulation applies.

The four-tier architecture

The European Commission’s own framing sets out the categories in order of intensity.

At the top sits unacceptable risk. Systems in this category are prohibited from being placed on the EU market at all. Article 5 of the Act enumerates the specific practices that fall into this tier. The list is short, the prohibition is close to absolute, and the penalty for violation is the maximum.

Below it sits high-risk, the tier that accounts for most of the regulation’s length. High-risk systems are allowed on the market, but the parties placing them there carry a substantial compliance stack: risk-management documentation, data-governance and quality requirements for training data, technical documentation, automatic event logging, transparency, human oversight, accuracy and robustness, registration in a Union database, and post-market monitoring. There are two routes by which a system ends up in this tier. One is being a safety component of a product already covered by existing EU product-safety legislation. The other is being used in one of the high-risk areas enumerated in Annex III of the Act.

Below high-risk sits transparency risk. The category covers systems that interact directly with humans, generate synthetic content, or perform emotion recognition and biometric categorisation in contexts that are not prohibited. The obligations centre on disclosure. Users have a right to know they are interacting with AI, or that content they are seeing was generated or substantially modified by AI.

Finally there is minimal or no risk, which the Commission describes as covering the bulk of AI systems on the market today: spam filters, recommender systems, video-game AI. The Act imposes no new mandatory obligations on this tier, though voluntary codes of conduct are encouraged.

Compliance work begins not with reading the Act’s obligations but with classification. A company that knows which tier each of its systems sits in can plan a compliance programme. A company that does not has nothing to plan.
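
One way to picture the classification-first approach is as an inventory in which every system carries a tier, and anything without one is flagged before any planning starts. The sketch below is a minimal Python illustration. The tier names follow the section above, while the system names, the one-line consequence summaries, and the `unclassified` helper are hypothetical.

```python
from enum import Enum
from typing import Dict, List, Optional


class RiskTier(Enum):
    UNACCEPTABLE = "unacceptable risk"
    HIGH = "high-risk"
    TRANSPARENCY = "transparency risk"
    MINIMAL = "minimal or no risk"


# One-line summaries paraphrased from the tier descriptions above.
CONSEQUENCE = {
    RiskTier.UNACCEPTABLE: "prohibited from the EU market",
    RiskTier.HIGH: "allowed, subject to the full compliance stack",
    RiskTier.TRANSPARENCY: "allowed, subject to disclosure obligations",
    RiskTier.MINIMAL: "no new mandatory obligations; voluntary codes encouraged",
}


def unclassified(inventory: Dict[str, Optional[RiskTier]]) -> List[str]:
    """Return the systems that have no tier yet and so cannot be planned for."""
    return sorted(name for name, tier in inventory.items() if tier is None)


# Hypothetical inventory for illustration only; real classification needs legal review.
inventory = {
    "spam-filter": RiskTier.MINIMAL,
    "cv-screening-tool": RiskTier.HIGH,
    "support-chatbot": RiskTier.TRANSPARENCY,
    "new-pilot-model": None,
}

pending = unclassified(inventory)
```

Here `pending` contains only `new-pilot-model`, the one system whose obligations cannot yet be planned.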

What is actually prohibited

The unacceptable-risk tier contains the most discussed and least understood provisions of the Act. The Commission’s overview lists eight prohibited practices:

- Harmful AI-based manipulation and deception.

- Harmful AI-based exploitation of vulnerabilities.

- Social scoring.

- Individual criminal-offence risk assessment or prediction.

- Untargeted scraping of the internet or CCTV material to create or expand facial-recognition databases.

- Emotion recognition in workplaces and education institutions.

- Biometric categorisation to deduce protected characteristics.

- Real-time remote biometric identification by law enforcement in publicly accessible spaces.

Each of these is defined more precisely in Article 5 than the headline suggests. Social scoring, for example, does not mean any system that scores people. The Act targets systems that evaluate or classify natural persons on the basis of their social behaviour or personal characteristics, where the resulting score leads to detrimental treatment in a context unrelated to where the data was collected, or where the treatment is disproportionate. Loyalty programmes, credit scoring governed by separate sectoral rules, and most internal HR analytics are not what this provision is aimed at.
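
The structure of the social-scoring test described above can be read as a conjunction of conditions. The following Python sketch encodes that reading as a first-pass screen. It is a simplification of this article's paraphrase, not of the legal text itself, and the function and parameter names are hypothetical.

```python
def may_be_prohibited_social_scoring(
    scores_social_behaviour_or_traits: bool,
    leads_to_detrimental_treatment: bool,
    context_unrelated_to_data_collection: bool,
    treatment_disproportionate: bool,
) -> bool:
    """First-pass screen only; a True result means "get legal advice", not "prohibited"."""
    if not (scores_social_behaviour_or_traits and leads_to_detrimental_treatment):
        return False
    # Either aggravating condition is enough once the first two hold.
    return context_unrelated_to_data_collection or treatment_disproportionate


# Hypothetical examples.
loyalty_programme = may_be_prohibited_social_scoring(True, False, False, False)
cross_context_blacklist = may_be_prohibited_social_scoring(True, True, True, False)
```

A loyalty programme that scores customers but causes no detrimental treatment fails the screen at the first step, which matches the point that scoring people is not in itself the target.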

Untargeted scraping of online images and CCTV footage to build facial-recognition databases is the provision most clearly directed at a specific class of product. The framing makes the policy interest visible: not the use of facial recognition as such, but the bulk-collection step that precedes it.

Real-time remote biometric identification by law enforcement in public spaces was the most heavily negotiated provision in the legislative process. The final text permits narrow exceptions for terror-attack response, the search for specific missing persons, and the prosecution of specific serious crimes, subject to prior judicial or administrative authorisation. The default is prohibition.

The Pennsylvania litigation against an AI chatbot operator, covered elsewhere on this site, is the kind of case that, in EU jurisdiction, would now sit close to the manipulation and exploitation-of-vulnerabilities lines. Most of the eight prohibitions have analogues in pending US state legislation; the EU Act is the version that has already become law.

The high-risk tier and its compliance stack

Most non-prohibited AI systems sold into regulated sectors will be classified high-risk under one of the two routes the Act provides. The first route, product-safety integration, captures systems that function as safety components of products already covered by sector legislation: medical devices, machinery, toys, civil aviation, marine equipment, lifts, and a long list of others. The second route is use in one of the areas listed in Annex III: biometric identification of natural persons, management of critical infrastructure, education and vocational training, employment and workforce management, access to essential public and private services, law enforcement, migration and border control, and administration of justice and democratic processes.
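
The two routes can be sketched as a simple screening function. This is a minimal Python illustration that assumes a system is described by just two facts: whether it is a safety component of a regulated product, and which use area it operates in. The area labels are shorthand for the list above, the `SystemProfile` type is hypothetical, and the sketch ignores any exceptions or further conditions in the legal text.

```python
from dataclasses import dataclass
from typing import Optional

# Annex III areas as listed in the paragraph above (labels are shorthand).
ANNEX_III_AREAS = frozenset({
    "biometrics",
    "critical_infrastructure",
    "education",
    "employment",
    "essential_services",
    "law_enforcement",
    "migration_border_control",
    "justice_democratic_processes",
})


@dataclass(frozen=True)
class SystemProfile:
    name: str
    safety_component_of_regulated_product: bool
    use_area: Optional[str] = None


def high_risk_route(system: SystemProfile) -> Optional[str]:
    """Return the route that makes the system high-risk, or None if neither applies."""
    if system.safety_component_of_regulated_product:
        return "product-safety"
    if system.use_area in ANNEX_III_AREAS:
        return "annex-iii"
    return None


# Hypothetical systems for illustration.
lift_controller = SystemProfile("lift-door-sensor", True)
hiring_tool = SystemProfile("cv-ranker", False, "employment")
playlist_tool = SystemProfile("playlist-recommender", False, "entertainment")
```

Returning the route rather than a plain yes or no is a deliberate choice in this sketch, because the route also affects which deadline applies.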

A system that falls under either route picks up obligations across its whole lifecycle. The risk-management system has to be established and maintained as a continuous, iterative process, not a one-off documentation exercise. Training, validation, and testing data have to meet specified quality criteria. Technical documentation has to be ready before the system is placed on the market and kept current. Automatic logging has to be designed in. Transparency to deployers has to be sufficient for them to understand how the system works in the context of their use. Human oversight has to be designed into the system, not just promised. Accuracy, robustness, and cybersecurity have to be tested against the state of the art at the time. Many high-risk systems have to be registered in a public EU database before going on the market. Post-market monitoring has to continue throughout the system’s commercial life.

This is the part of the Act that most resembles existing EU product-safety legislation, and not by coincidence. The drafters worked from the New Legislative Framework that governs how products are conformity-assessed in the Union. Companies in regulated sectors will find the structure familiar even where the AI-specific requirements are new. Companies outside those sectors should expect a learning curve.

General-purpose AI: the separate track

Late in the legislative process, the Act added a parallel set of obligations for general-purpose AI models, the foundation-model layer on which most consumer AI products are built. The structure is two-tiered. All GPAI providers must publish a summary of the training data used, maintain up-to-date technical documentation, and comply with EU copyright law on the use of training material. GPAI models with systemic risk, defined by a compute threshold among other criteria, face additional obligations: model evaluations against the state of the art, risk assessment, serious-incident reporting, cybersecurity measures, and energy-consumption disclosure.
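
The two-tier GPAI structure amounts to a baseline list plus an add-on list for systemic-risk models. The Python sketch below illustrates it using the obligations named above. The `1e25` floating-point-operation figure is the presumption threshold in Article 51 of the Act and is not stated in this article, so treat it as an assumption to verify. Because compute is only one of the criteria, the `designated_systemic` flag stands in, hypothetically, for the others.

```python
BASELINE_OBLIGATIONS = (
    "training-data summary",
    "up-to-date technical documentation",
    "EU copyright compliance",
)

SYSTEMIC_RISK_OBLIGATIONS = (
    "state-of-the-art model evaluations",
    "risk assessment",
    "serious-incident reporting",
    "cybersecurity measures",
    "energy-consumption disclosure",
)

# Assumption: cumulative training compute threshold from Article 51; verify against the Act.
SYSTEMIC_RISK_FLOP_THRESHOLD = 1e25


def gpai_obligations(training_flop: float, designated_systemic: bool = False) -> tuple:
    """Return the obligations for a GPAI model under the two-tier structure."""
    systemic = designated_systemic or training_flop > SYSTEMIC_RISK_FLOP_THRESHOLD
    if systemic:
        return BASELINE_OBLIGATIONS + SYSTEMIC_RISK_OBLIGATIONS
    return BASELINE_OBLIGATIONS


# Hypothetical models.
smaller_model = gpai_obligations(1e24)
frontier_model = gpai_obligations(3e25)
```

The baseline obligations are always included, so a systemic-risk model carries both lists, not just the second.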

The GPAI obligations have applied since 2 August 2025. Day-to-day oversight of GPAI compliance sits with the European AI Office, a unit established within the Commission’s Directorate-General for Communications Networks, Content and Technology. The AI Office is the body that issues guidance, runs evaluations of GPAI models, and can request information and corrective action from providers. Whatever the eventual texture of GPAI enforcement turns out to be, it will run through this Office.

Alongside the binding obligations, the Commission has launched the AI Pact, a voluntary instrument under which signatory companies commit to begin implementing AI Act measures ahead of their formal deadlines. The Pact is not legally binding. Over 230 organisations signed the initial pledges in September 2024, agreeing at minimum to establish an AI governance strategy, identify and map their high-risk AI systems, and promote AI awareness among staff. For companies that want a structured on-ramp to compliance without waiting for the harmonised standards process to conclude, the Pact is the operational instrument available now.

The phased rollout

The Act’s most operationally consequential feature is that its provisions take effect on different dates. The entry-into-force date of 1 August 2024 starts the clock, but most of the substantive obligations follow on a staggered schedule that the Commission lays out in its overview.

The prohibitions in Article 5 began to apply on 2 February 2025. Any AI system that falls within one of the unacceptable-risk categories had to be off the EU market by that date.

The governance rules and GPAI obligations followed on 2 August 2025. Member states were required to designate national competent authorities. GPAI providers became subject to the obligations described above.

The general date of full applicability for the remaining provisions, including most of the high-risk obligations under Annex III, is 2 August 2026. A second high-risk window, specifically for high-risk system rules in biometrics, critical infrastructure, education, employment, migration, asylum and border control, runs to 2 December 2027 per the Commission’s published timeline. An extended transition out to 2 August 2028 applies to high-risk AI systems integrated into products already covered by existing product-safety legislation, recognising that aligning two regulatory regimes for the same product takes longer.

The practical implication is that, depending on the system, a company may already be subject to enforcement (prohibitions, GPAI), or may have until 2026, 2027, or 2028 to complete its compliance work. The classification step at the front of any compliance programme is also the step that determines the deadline.
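
The staggered schedule lends itself to a small lookup table. The Python sketch below uses the dates exactly as given in this section. The milestone labels and helper functions are hypothetical, and the dates should be checked against the Commission’s current timeline before anyone relies on them.

```python
from datetime import date

# Application dates as given in this article; verify before relying on them.
APPLICATION_DATES = {
    "prohibitions": date(2025, 2, 2),
    "governance_and_gpai": date(2025, 8, 2),
    "general_applicability": date(2026, 8, 2),
    "second_high_risk_window": date(2027, 12, 2),
    "product_integrated_high_risk": date(2028, 8, 2),
}


def already_applicable(on: date) -> list:
    """Return the milestones that apply on the given day, earliest first."""
    ordered = sorted(APPLICATION_DATES.items(), key=lambda item: item[1])
    return [name for name, start in ordered if start <= on]


def days_remaining(milestone: str, on: date) -> int:
    """Days from `on` until the milestone applies; zero or negative means it already applies."""
    return (APPLICATION_DATES[milestone] - on).days


in_force_sept_2025 = already_applicable(date(2025, 9, 1))
```

For a date of 1 September 2025, `in_force_sept_2025` lists the prohibitions and the governance and GPAI milestone, the two sets of provisions described above as already subject to enforcement.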

Extraterritorial reach

Article 2 of the Act extends its application beyond providers established in the Union. Non-EU providers fall within scope if their AI systems are placed on the EU market or if the output produced by their systems is used in the Union. The reach pattern resembles the GDPR’s, and it captures most foundation-model providers regardless of where they are headquartered.

The US has counterparts, though with a different posture. The pre-release AI testing programme run by CAISI, the new US institute working with major AI labs, is voluntary, pre-deployment, and agency-coordinated. For companies operating in both markets, the practical question is rarely whether either jurisdiction applies but which provisions apply, on what schedule, and where in the corporate structure legal responsibility sits.

The interpretive decade

The Act is now law. How it will be enforced is the work of the next several years.

Three workstreams will shape the practical answers. The GPAI code of practice, which the Commission is developing with industry and civil society, will translate the foundation-model obligations into specific compliance criteria. Harmonised standards being developed by CEN and CENELEC will do the same for high-risk obligations; compliance with a harmonised standard creates a presumption of conformity. National competent authorities, designated by each member state, will set the tone of enforcement.

By autumn 2025 the AI Office had begun issuing implementation guidance on a roughly monthly cadence. Counsel watching that cadence will read the regulator’s priorities earlier than counsel waiting for the harmonised standards to land. The cadence is, for now, the most reliable public signal of where the early enforcement attention is going.

The text of the Act is fixed. Its interpretation will not be settled for some time, and the companies that have begun their classification work first will have the shorter list of unanswered questions when the first enforcement actions come.

Further reading: the Commission’s regulatory framework for AI page is the canonical jumping-off point. Article 99 covers penalties. The full text on EUR-Lex is the primary source for any specific question. The European AI Office is the body to track for GPAI enforcement, and the AI Pact is the voluntary instrument for early-mover companies.
