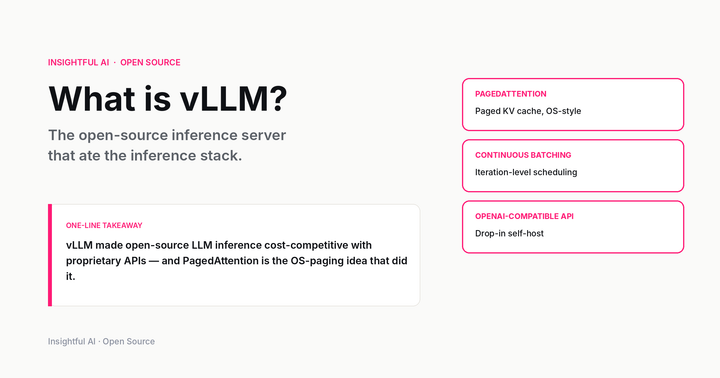

What is vLLM? The open-source inference server that ate the inference stack

What PagedAttention actually does, how continuous batching works, how performance compares with TGI, TensorRT-LLM, and SGLang, when to pick it, and the LF AI governance that made it vendor-neutral.