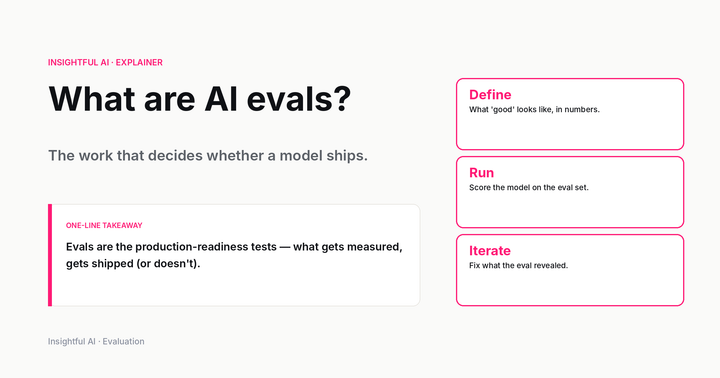

What are AI evals? The work that decides whether a model ships

Evals are how labs decide a model is ready to ship, and how buyers decide which model to buy. This plain-English guide covers capability, safety, and production evals, the LLM-as-a-judge pattern, and what changed in 2026.